The future of user interfaces

Computing is changing drastically as new computing models and user interfaces are created and updated at a rapid pace. On one hand, innovative models such as voice recognition, autonomous agents, and bots are replacing traditional user interfaces. At the opposite end of the spectrum, augmented reality is making its way into new user interfaces, as in the holographic computing work led by Microsoft, alongside virtual reality platforms coming to market from Google and Facebook. In both cases, turning these ideas into practical trends will require overcoming some key technological limitations.

Voice

Voice recognition is one of the trends changing the way we use computing and applications. Apple’s Siri, Google Now, Microsoft Cortana and Amazon Echo, along with their smart agents, are prime examples of a clear wave moving toward the application of machine learning to voice and data. Companies like Apple, Google, and Baidu report speech recognition accuracy above 95 percent, and are still improving. According to Andrew Ng, chief scientist at Baidu, 99 percent accuracy is the key milestone for speech recognition. Ng has also predicted that 50 percent of web searches will be voice-powered by 2019.

Agents

The next natural step for this accurate voice recognition technology is the incorporation of a learning bot. The bot will be fed continuous data about the user’s life and will therefore be able to assist with the user’s tasks via voice recognition.

These new technologies will require voice recognition access, data access, and interoperability with connected assets. They will continually learn, access new data sources, and provide users with a significant amount of value. However, to deliver these innovations, companies will face many, and sometimes steep, costs: these technologies will generate ever-increasing numbers of API calls, which will require vast amounts of infrastructure and new levels of scale and management.

Many believe that voice represents the computing interface model of the future. Undoubtedly, we are closer than ever to achieving the technical prowess voice needs to be fully functional.

Performance

The most important factor for adoption is the flawless performance of these interfaces. Voice recognition systems are often hosted by third parties on other networks, so voice-driven applications must send data to one of these providers, get a response, and then process it. Because most of these transactions lack end-to-end visibility, delivering results to the user in less than 10 seconds is quite a demanding service level.
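As a rough illustration of that round trip, the sketch below times each hop of a single voice request under one trace ID and compares the total against the 10-second threshold. It is a minimal sketch under stated assumptions, not a description of any vendor's product: `Trace`, `handle_voice_request`, and the `recognize` and `backend` callables are hypothetical names standing in for a third-party speech provider and an existing backend API.

```python
import time
import uuid
from contextlib import contextmanager

BUDGET_SECONDS = 10.0  # the response-time threshold discussed in the text


class Trace:
    """Collects timed spans for one user request under a single trace ID."""

    def __init__(self):
        self.trace_id = uuid.uuid4().hex  # hypothetical correlation ID
        self.spans = []  # list of (hop name, elapsed seconds)

    @contextmanager
    def span(self, name):
        # Record how long the wrapped block takes, even if it raises.
        start = time.perf_counter()
        try:
            yield
        finally:
            self.spans.append((name, time.perf_counter() - start))

    def total(self):
        return sum(elapsed for _, elapsed in self.spans)

    def within_budget(self, budget=BUDGET_SECONDS):
        return self.total() <= budget

    def slowest(self):
        # Name of the hop that consumed the most time.
        return max(self.spans, key=lambda s: s[1])[0]


def handle_voice_request(audio, recognize, backend):
    """Send audio to a speech provider, then call a backend, timing each hop.

    `recognize` and `backend` are placeholders (assumptions) for the
    third-party voice service and the company's own API.
    """
    trace = Trace()
    with trace.span("speech_provider"):
        text = recognize(audio)
    with trace.span("backend_api"):
        answer = backend(text)
    return answer, trace
```

With stub functions in place of real services, `handle_voice_request(b"audio", lambda a: "balance", lambda t: "reply:" + t)` returns the answer together with a trace whose `slowest()` method names the hop to investigate first when the budget is exceeded.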

Meeting this response time requirement will rest on the ability to trace transactions and to follow the user request through the dependent systems and APIs. An interesting example is the major success of Amazon’s Alexa voice services. Amazon’s first voice-enabled device, the Echo, is ranked #2 in electronics in Amazon’s store today (June 2016), even 20 months after its release. In this short time, over 1,000 integrations, also known as “skills”, have been added to the device. Some of the most impressive skills to date replace existing interfaces. They include useful apps for reference lookups, news and stocks, home automation, travel, ordering goods and services, and of course, personal and social data.

Among the most popular skills are Capital One’s offerings. Capital One, an American financial services company, has a dedicated mini-site focused on its Alexa interface. It is one of the few ‘traditional’ elite companies that recognise their customers’ needs are shifting and are embracing the imperative to innovate and leverage new technologies to meet these needs. Capital One is establishing itself as a leader in this area, paving the way through its efforts, its example and its important contributions to open source technologies.

Still, new interaction schemes bring plenty of challenges when it comes to integrating existing backend systems with new API-driven functionality, such as that required by Alexa. To troubleshoot effectively and ensure a flawless user experience, proper end-to-end visibility across multiple systems and technologies is crucial. The 10-second result delivery threshold seems ambitious given the complexity of the systems involved. However, as the traditional web has shown, once consumers adopt and grow comfortable with new technologies, the bar quickly moves higher, never in the opposite direction.

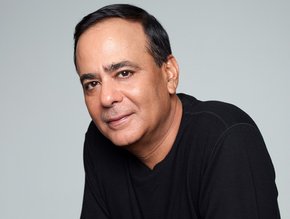

Andrew Brockfield is Australia & New Zealand Country Manager at AppDynamics.
